Integrating with AWS Services

So far, our OSToy application has functioned independently without relying on any external services. While this may be nice for a workshop environment, it's not exactly representative of real-world applications. Many applications require external services like databases, object stores, or messaging services.

In this section, we will learn how to integrate our OSToy application with other AWS services, specifically Amazon S3. By the end of this section, our application will be able to securely create objects in S3 and read them back.

To achieve this, we will use AWS Controllers for Kubernetes (ACK) to create the AWS resources our application needs directly from Kubernetes, and IAM Roles for Service Accounts (IRSA) to manage access and authentication.

To demonstrate this integration, we will use OSToy to create a basic text file and save it in an S3 bucket. Finally, we will confirm that the file was successfully added and can be read from the bucket.

AWS Controllers for Kubernetes (ACK)

AWS Controllers for Kubernetes (ACK) lets you create and use AWS services directly from Kubernetes. You can deploy your applications, including any AWS services they require, entirely within Kubernetes, using familiar declarative manifests to define and create AWS resources such as S3 buckets or RDS databases.

To illustrate the use of ACK on ROSA, we will walk through a simple example: creating an S3 bucket, integrating it with OSToy, uploading a file to it, and viewing the file in our application. This part of the workshop also touches on granting your applications access to AWS services (though that topic deserves a workshop of its own).

IAM Roles for Service Accounts (IRSA)

To create resources in your AWS account, the ACK controller needs credentials for the relevant AWS service (S3 in our case). There are a few options for providing them, but the recommended approach is IAM Roles for Service Accounts (IRSA), which automates the management and rotation of the temporary credentials that the service account uses. As stated on the ACK documentation page:

Instead of creating and distributing your AWS credentials to the containers or using the Amazon EC2 instance’s role, you can associate an IAM role with a Kubernetes service account. The applications in a Kubernetes pod container can then use an AWS SDK or the AWS CLI to make API requests to authorized AWS services.

In short, IRSA is an AWS feature that lets you assign IAM roles directly to Kubernetes service accounts.

To get the credentials, pods receive a valid OIDC JSON web token (JWT) and pass it to the AWS STS AssumeRoleWithWebIdentity API operation in order to receive IAM temporary role credentials.
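To make the token exchange concrete, here is a minimal sketch of what the AWS SDKs do on a pod's behalf, wrapped in a shell function around the AWS CLI. It assumes the CLI is available in the container and that the webhook has injected AWS_ROLE_ARN and AWS_WEB_IDENTITY_TOKEN_FILE (the variables verified later in this section); the session name ostoy-demo is a hypothetical placeholder. You normally never run this yourself, because the SDKs and CLI pick these variables up automatically.

```shell
# Sketch: exchange the pod's projected service account token (a JWT) for
# temporary IAM credentials, as the AWS SDKs do automatically.
# Assumes the pod identity webhook injected AWS_ROLE_ARN and
# AWS_WEB_IDENTITY_TOKEN_FILE, and that the AWS CLI is installed.
assume_role_with_web_identity() {
  local session_name="${1:-ostoy-demo}"  # hypothetical session name
  if [ -z "${AWS_ROLE_ARN:-}" ] || [ -z "${AWS_WEB_IDENTITY_TOKEN_FILE:-}" ]; then
    echo "IRSA environment variables are not set" >&2
    return 1
  fi
  # Print only the expiration time so the secret keys are not echoed.
  aws sts assume-role-with-web-identity \
    --role-arn "$AWS_ROLE_ARN" \
    --role-session-name "$session_name" \
    --web-identity-token "$(cat "$AWS_WEB_IDENTITY_TOKEN_FILE")" \
    --query Credentials.Expiration \
    --output text
}

# Usage (from a shell inside the pod): assume_role_with_web_identity
```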

In ROSA, IRSA relies on the EKS pod identity mutating webhook, which modifies pods that require AWS IAM access. Because we are using ROSA with STS, this webhook is already installed.

Note

Using IRSA allows us to adhere to the following best practices:

- Principle of least privilege - We can create finely tuned IAM permissions for AWS roles that allow only the access required. Furthermore, these permissions are limited to the service account associated with the role, so only pods that use that service account have access.

- Credential isolation - A pod can only retrieve credentials for the IAM role associated with the service account that the pod uses, and no other.

- Auditing - In AWS, API calls made with the role's credentials can be audited in AWS CloudTrail.

Normally you would need to provision an OIDC provider, but ROSA with STS already deploys one, so we can use it.

Section overview

To make the process clearer, here is an overview of the procedure we are going to follow. There are two main parts:

- ACK controller for the cluster - This allows you to create and delete buckets in the S3 service through a Kubernetes custom resource for the bucket.
  - Install the controller (in our case an Operator), which also creates the required namespace and service account.
  - Run a script that will:
    - Create the AWS IAM role for the ACK controller and assign the S3 policy.
    - Associate the AWS IAM role with the service account.
- Application access - Grant our application pod access to our S3 bucket.
  - Create a service account for the application.
  - Create an AWS IAM role for the application and assign the S3 policy.
  - Associate the AWS IAM role with the service account.
  - Update the application deployment manifest to use the service account.

Install an ACK controller

There are a few ways to do this, but we will use an Operator to make it easy. The Operator installation will also create an ack-system namespace and a service account ack-s3-controller for you.

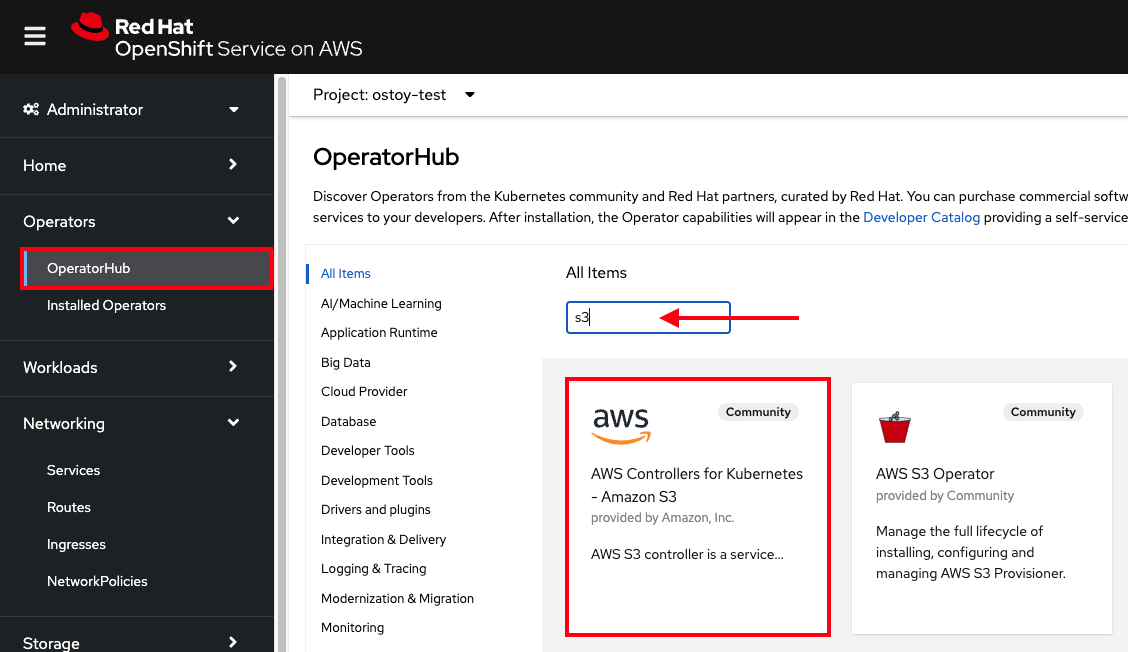

- Log in to your OpenShift cluster's web console (if you aren't already).

- On the left menu, click on "Operators > OperatorHub".

- In the filter box, enter "S3" and select "AWS Controller for Kubernetes - Amazon S3".

- If you get a pop-up saying that it is a community operator, just click "Continue".

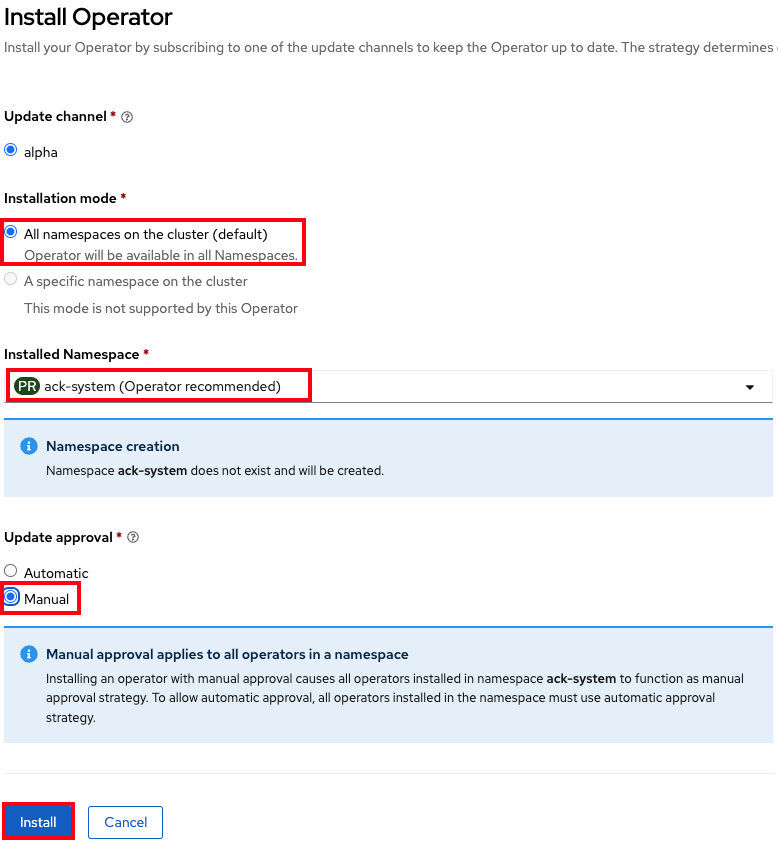

- Click "Install" in the top left.

- Ensure that "All namespaces on the cluster" is selected for "Installation mode".

- Ensure that "ack-system" is selected for "Installed Namespace".

- Under "Update approval", ensure that "Manual" is selected.

Warning

Make sure to select "Manual" so that changes to the service account do not get overwritten by an automatic operator update.

- Click "Install" at the bottom. The settings should look like the image below.

- Click "Approve".

- The installation will start, but it won't complete until the next step is finished, so proceed to the next step.

Set up access for the controller

Create an IAM role and policy for the ACK controller

- Run the setup-s3-ack-controller.sh script, which automates the process for you:

```shell
curl https://raw.githubusercontent.com/openshift-cs/rosaworkshop/master/rosa-workshop/ostoy/resources/setup-s3-ack-controller.sh | bash
```

Don't worry, you will perform these steps yourself later (for the application). In short, the script creates an AWS IAM role with an AWS S3 policy and associates that IAM role with the service account.

If you'd rather not pipe a script from the internet straight into bash, download it first and read it before you run it.

- When the script completes, it restarts the controller deployment, which updates the service controller pods with the IRSA environment variables.

- Confirm that the AWS_ROLE_ARN and AWS_WEB_IDENTITY_TOKEN_FILE environment variables are set in the controller pod.
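A minimal sketch of the careful route (download and review the script before running it) and of the environment check, assuming the oc CLI is logged in to your cluster. The helper names are hypothetical; the check simply filters oc describe pod output for lines starting with AWS_, among which you would expect AWS_ROLE_ARN and AWS_WEB_IDENTITY_TOKEN_FILE for the controller pod.

```shell
# URL taken from the setup command above.
SCRIPT_URL="https://raw.githubusercontent.com/openshift-cs/rosaworkshop/master/rosa-workshop/ostoy/resources/setup-s3-ack-controller.sh"

# Hypothetical helper: download the setup script so it can be reviewed first.
download_ack_setup_script() {
  curl -fsSL -o setup-s3-ack-controller.sh "$SCRIPT_URL"
}

# Hypothetical helper: print only the AWS_* environment variable lines
# from the pods in the given namespace.
show_irsa_env() {
  oc describe pod -n "$1" | grep -E '^[[:space:]]*AWS_'
}

# Usage:
#   download_ack_setup_script && less setup-s3-ack-controller.sh
#   bash setup-s3-ack-controller.sh
#   show_irsa_env ack-system
```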


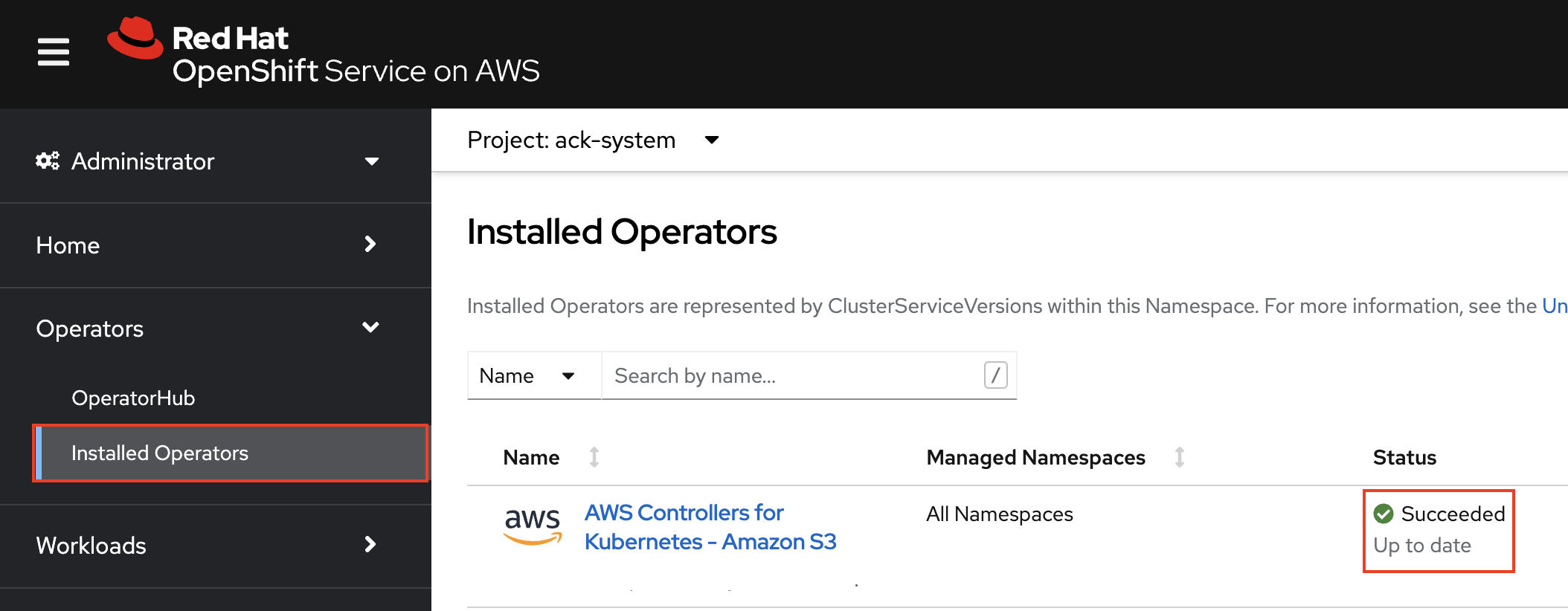

The ACK controller should now be set up successfully. You can confirm this in the OpenShift Web Console under "Operators > Installed operators".

We can now create/delete buckets through Kubernetes using the ACK. In the next section we will enable our application to use the S3 bucket that we will create.

Set up access for our application

In this section we will create an AWS IAM role and service account so that OSToy can read and write objects to the S3 bucket that we will create.

Before starting, create a new, uniquely named project for OSToy.

Also, save the name of the namespace/project that OSToy is in to the OSTOY_NAMESPACE environment variable; the commands in the rest of this section reference it.
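One way to do both, sketched as shell functions and assuming you are logged in with oc. The random six-character suffix is an illustrative choice, not a workshop requirement; any project name unique enough to yield a globally unique "<project>-bucket" name works.

```shell
# Hypothetical helper: build a project name with a short random suffix,
# e.g. "ostoy-3f9a1c". Lowercase letters and digits keep the derived
# bucket name "<project>-bucket" valid for S3.
unique_project_name() {
  local suffix
  suffix=$(LC_ALL=C tr -dc 'a-z0-9' < /dev/urandom | head -c 6)
  echo "ostoy-${suffix}"
}

# Create the project (oc new-project also switches to it), then save its name.
create_ostoy_project() {
  oc new-project "$(unique_project_name)" > /dev/null || return 1
  # "oc project -q" prints only the name of the current project.
  OSTOY_NAMESPACE=$(oc project -q)
  export OSTOY_NAMESPACE
}

# Usage: create_ostoy_project && echo "$OSTOY_NAMESPACE"
```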

Create an AWS IAM role
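The first two steps below (account ID and OIDC provider) can be sketched as follows. This assumes the AWS CLI is configured for the account that hosts the cluster and that rosa describe cluster with -o yaml prints an oidc_endpoint_url field; the function names are hypothetical. The trust policy expects the provider without its https:// prefix, so the sketch strips it.

```shell
# Export the AWS account ID of the current CLI credentials.
export_aws_account_id() {
  AWS_ACCOUNT_ID=$(aws sts get-caller-identity --query Account --output text)
  export AWS_ACCOUNT_ID
}

# Export the cluster's OIDC provider (issuer URL without "https://").
# Assumption: the YAML output contains an oidc_endpoint_url field.
export_oidc_provider() {
  local cluster_name="$1" url
  url=$(rosa describe cluster -c "$cluster_name" -o yaml | awk '/oidc_endpoint_url/ {print $2}')
  OIDC_PROVIDER="${url#https://}"
  export OIDC_PROVIDER
}

# Usage:
#   export_aws_account_id
#   export_oidc_provider <cluster-name>
```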

- Get your AWS account ID.

- Get the OIDC provider. Replace "<cluster-name>" with the name of your cluster.

- Create the trust policy file. It allows only the ostoy-sa service account in the OSToy project to assume the role.

```shell
cat <<EOF > ./ostoy-sa-trust.json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::${AWS_ACCOUNT_ID}:oidc-provider/${OIDC_PROVIDER}"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "${OIDC_PROVIDER}:sub": "system:serviceaccount:${OSTOY_NAMESPACE}:ostoy-sa"
        }
      }
    }
  ]
}
EOF
```

- Create the AWS IAM role to be used with your service account.
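The final step can be sketched as below. The role name is an assumption: ostoy-sa matches the role ARN shown in the example output later in this section, but any name works as long as you use it consistently. The function refuses to run if the trust policy file from the previous step is missing.

```shell
# Assumed role name; override APP_ROLE_NAME to use another one.
APP_ROLE_NAME="${APP_ROLE_NAME:-ostoy-sa}"

# Create the IAM role from the trust policy in ./ostoy-sa-trust.json.
create_app_role() {
  if [ ! -f ./ostoy-sa-trust.json ]; then
    echo "ostoy-sa-trust.json not found; create the trust policy first" >&2
    return 1
  fi
  aws iam create-role \
    --role-name "$APP_ROLE_NAME" \
    --assume-role-policy-document file://ostoy-sa-trust.json
}

# Usage: create_app_role
```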

Attach the S3 policy to the IAM role

- Get the ARN of the AWS managed AmazonS3FullAccess policy.

- Attach that policy to the AWS IAM role.
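A sketch of both steps, assuming the illustrative role name ostoy-sa (adjust APP_ROLE_NAME if you chose another). Note that AmazonS3FullAccess grants access to every bucket in the account; a policy scoped to the OSToy bucket would follow the least-privilege principle more closely.

```shell
# Assumed role name, matching the one used when creating the role.
APP_ROLE_NAME="${APP_ROLE_NAME:-ostoy-sa}"

# Look up the ARN of the AWS managed AmazonS3FullAccess policy.
export_s3_policy_arn() {
  POLICY_ARN=$(aws iam list-policies --scope AWS \
    --query 'Policies[?PolicyName==`AmazonS3FullAccess`].Arn' --output text)
  export POLICY_ARN
}

# Attach that policy to the application's IAM role.
attach_s3_policy() {
  aws iam attach-role-policy --role-name "$APP_ROLE_NAME" --policy-arn "$POLICY_ARN"
}

# Usage: export_s3_policy_arn && attach_s3_policy
```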

Create the service account for our pod
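The role ARN used in the first step below can be captured like this, again assuming the illustrative role name ostoy-sa. The exported APP_IAM_ROLE_ARN is the variable that the service account manifest references.

```shell
# Assumed role name, matching the one used when creating the role.
APP_ROLE_NAME="${APP_ROLE_NAME:-ostoy-sa}"

# Export the role ARN used in the service account annotation.
export_app_role_arn() {
  APP_IAM_ROLE_ARN=$(aws iam get-role --role-name "$APP_ROLE_NAME" \
    --query Role.Arn --output text)
  export APP_IAM_ROLE_ARN
}

# Usage: export_app_role_arn && echo "$APP_IAM_ROLE_ARN"
```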

- Get the ARN of the AWS IAM role we created so that it can be included as an annotation when creating our service account.

- Create the service account via a manifest. Note the annotation that references our AWS IAM role.

```shell
cat <<EOF | oc apply -f -
apiVersion: v1
kind: ServiceAccount
metadata:
  name: ostoy-sa
  namespace: ${OSTOY_NAMESPACE}
  annotations:
    eks.amazonaws.com/role-arn: "$APP_IAM_ROLE_ARN"
EOF
```

Warning

Do not change the name of the service account from "ostoy-sa". Otherwise you will have to change the trust relationship for the AWS IAM role.

- Grant the service account the "restricted" security context constraint (SCC).

- Confirm that it was successful. The output should show the eks.amazonaws.com/role-arn annotation with the ARN of your AWS IAM role.
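The last two steps can be sketched as one function using oc adm policy add-scc-to-user, where the -z flag names a service account in the target namespace. The function name is hypothetical. In the describe output, look for eks.amazonaws.com/role-arn under Annotations.

```shell
# Grant the "restricted" SCC to ostoy-sa, then describe the service account
# so the eks.amazonaws.com/role-arn annotation can be checked.
grant_and_verify_ostoy_sa() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  oc adm policy add-scc-to-user restricted -z ostoy-sa -n "$ns" || return 1
  oc describe serviceaccount ostoy-sa -n "$ns"
}

# Usage: grant_and_verify_ostoy_sa "$OSTOY_NAMESPACE"
```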

Create an S3 bucket

- Create the S3 bucket using a manifest for the ACK Bucket custom resource.

Warning

The OSToy application expects to find a bucket named after the namespace/project that OSToy is in, in the form "<namespace>-bucket". If you use anything other than the namespace of your OSToy project, this feature will not work. For example, if our project is "ostoy", the value for name must be "ostoy-bucket".

Also, because Amazon S3 requires bucket names to be globally unique, you must run OSToy in a project with a unique name as well, which is why we created a new project for this section.

- Confirm the bucket was created.
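A minimal sketch of both steps, assuming the ACK S3 Bucket custom resource (API group s3.services.k8s.aws, version v1alpha1) installed by the operator above; the function names are hypothetical. Both the resource name and the S3 bucket name (spec.name) are set to "<namespace>-bucket", as OSToy expects, and the confirmation checks both the Kubernetes resource and the bucket listing in S3.

```shell
# Create an ACK Bucket resource; the ACK S3 controller then creates the
# actual S3 bucket. OSToy expects the name "<namespace>-bucket".
create_ostoy_bucket() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  cat <<EOF | oc apply -f -
apiVersion: s3.services.k8s.aws/v1alpha1
kind: Bucket
metadata:
  name: ${ns}-bucket
  namespace: ${ns}
spec:
  name: ${ns}-bucket
EOF
}

# Confirm the bucket exists as a Kubernetes resource and in S3.
confirm_ostoy_bucket() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  oc get buckets.s3.services.k8s.aws -n "$ns" || return 1
  aws s3 ls | grep -- "${ns}-bucket"
}

# Usage: create_ostoy_bucket && confirm_ostoy_bucket
```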

Redeploy the OSToy app with the new service account

- Deploy the microservice.

- Deploy the frontend.

```shell
oc apply -f https://raw.githubusercontent.com/openshift-cs/rosaworkshop/master/rosa-workshop/ostoy/yaml/ostoy-frontend-deployment.yaml
```

- We now need to run our pod with the service account we created. Patch the ostoy-frontend deployment to add it. In effect, this makes the deployment manifest specify the ostoy-sa service account in its pod template (spec.template.spec).

- Give it a minute to update the pod.
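A sketch of the microservice deployment and the patch. The microservice manifest URL is an assumption (a file named ostoy-microservice-deployment.yaml next to the frontend manifest), so check the repository before relying on it; the function names are hypothetical. The patch sets serviceAccountName in the pod template, which triggers a rollout, and oc rollout status waits for the new pod.

```shell
# Assumption: the microservice manifest sits next to the frontend manifest
# under this name. Verify it in the repository before relying on it.
MICROSERVICE_URL="https://raw.githubusercontent.com/openshift-cs/rosaworkshop/master/rosa-workshop/ostoy/yaml/ostoy-microservice-deployment.yaml"

# Deploy the microservice into the current project, like the frontend command.
deploy_ostoy_microservice() {
  oc apply -f "$MICROSERVICE_URL"
}

# Point the frontend's pod template at ostoy-sa. Changing the template
# triggers a rollout, so the new pod gets the IRSA variables injected.
patch_frontend_service_account() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  oc patch deployment ostoy-frontend -n "$ns" --type=merge \
    -p '{"spec":{"template":{"spec":{"serviceAccountName":"ostoy-sa"}}}}' || return 1
  oc rollout status deployment/ostoy-frontend -n "$ns"
}

# Usage: deploy_ostoy_microservice && patch_frontend_service_account
```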

Confirm that the IRSA environment variables are set

When AWS clients or SDKs connect to the AWS APIs, they detect the web identity token (AWS_WEB_IDENTITY_TOKEN_FILE) and call AssumeRoleWithWebIdentity to assume the IAM role (AWS_ROLE_ARN). See the AssumeRoleWithWebIdentity documentation for more details.

As we did for the ACK controller, describe the OSToy frontend pod and verify that the AWS_WEB_IDENTITY_TOKEN_FILE and AWS_ROLE_ARN environment variables exist. Their presence shows that the webhook has configured the pod so that our application can authenticate to the S3 service.
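A minimal sketch of that check, assuming OSTOY_NAMESPACE is set; the function name is hypothetical. oc describe pod accepts a name prefix, so ostoy-frontend matches the frontend pod regardless of its generated suffix, and grep keeps only the lines starting with AWS_.

```shell
# Show the IRSA variables injected into the OSToy frontend pod(s).
# "ostoy-frontend" acts as a pod name prefix for oc describe.
show_frontend_irsa_env() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  oc describe pod ostoy-frontend -n "$ns" | grep -E '^[[:space:]]*AWS_'
}

# Usage: show_frontend_irsa_env
```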

We should see a response like:

```
AWS_ROLE_ARN: arn:aws:iam::000000000000:role/ostoy-sa
AWS_WEB_IDENTITY_TOKEN_FILE: /var/run/secrets/eks.amazonaws.com/serviceaccount/token
```

See the bucket contents through OSToy

Use our app to see the contents of our S3 bucket.
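The route needed in the steps below can be retrieved as sketched here, assuming the frontend manifest created a route in the OSToy project; the function name is hypothetical. The jsonpath template prints each route's host with the http:// prefix that the steps call for.

```shell
# Print each route host in the OSToy project as an http:// URL.
show_ostoy_urls() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  oc get route -n "$ns" \
    -o jsonpath='{range .items[*]}{"http://"}{.spec.host}{"\n"}{end}'
}

# Usage: show_ostoy_urls
```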

-

Get the route for the newly deployed application.

-

Open a new browser tab and enter the route from above. Ensure that it is using

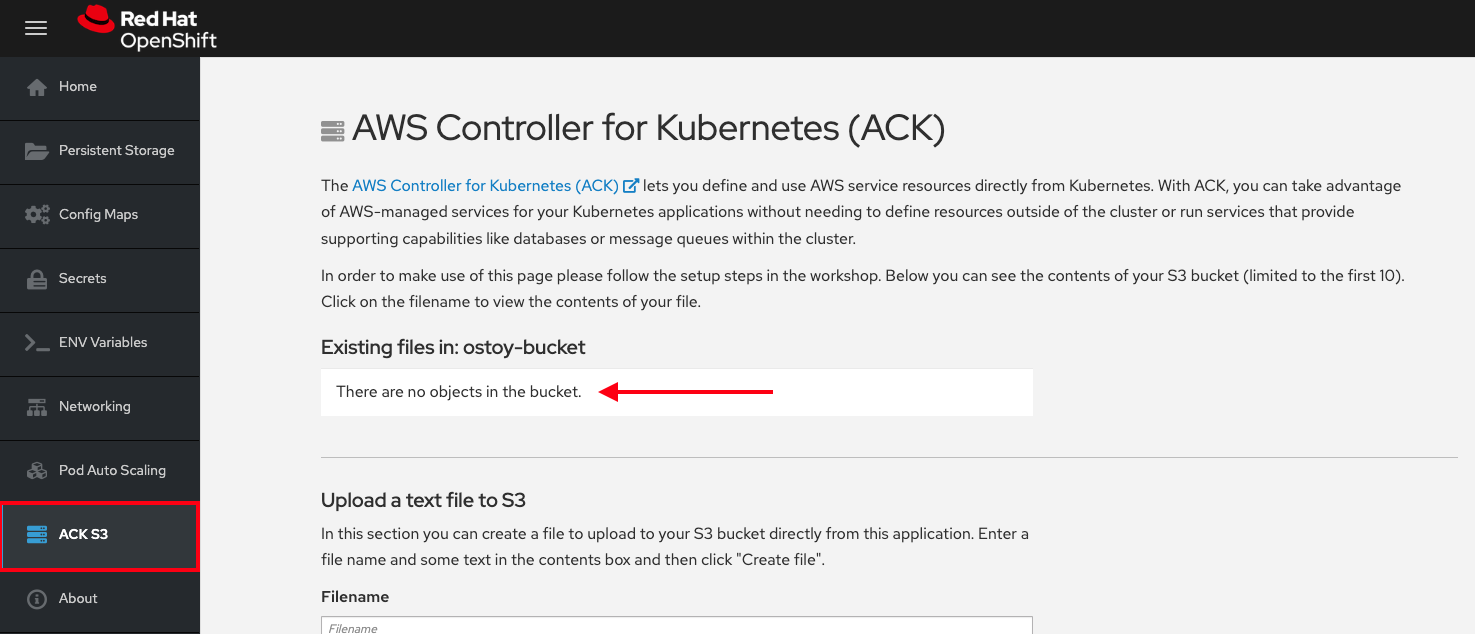

http://and nothttps://. - A new menu item will appear. Click on "ACK S3" in the left menu in OSToy.

-

You will see a page that lists the contents of the bucket, which at this point should be empty.

-

Move on to the next step to add some files.

Create files in your S3 bucket

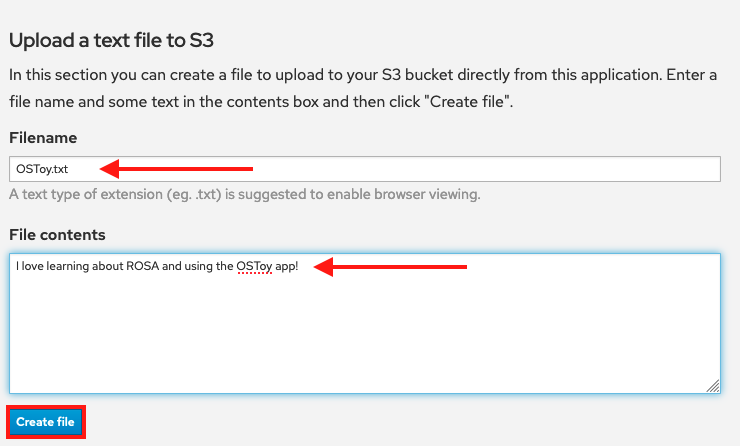

For this step we will use OSToy to create a file and upload it to the S3 bucket. While S3 can accept any kind of file, for this workshop we'll use text files so that the contents can easily be rendered in the browser.

- Click on "ACK S3" in the left menu in OSToy.

- Scroll down to the section underneath the "Existing files" section, titled "Upload a text file to S3".

- Enter a file name for your file.

- Enter some content for your file.

-

Click "Create file".

-

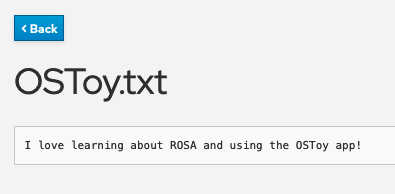

Scroll up to the top section for existing files and you should see your file that you just created there.

-

Click on the file name to view the file.

-

Now to confirm that this is not just some smoke and mirrors, let's confirm directly via the AWS CLI. Run the following to list the contents of our bucket.

We should see our file listed there:
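A minimal sketch of the listing, plus a hypothetical helper that prints a file's contents from the bucket (aws s3 cp with - as the destination writes the object to standard output). Both assume the bucket follows the "<namespace>-bucket" convention and that OSTOY_NAMESPACE is set.

```shell
# List the objects in the OSToy bucket ("<namespace>-bucket").
list_ostoy_bucket() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  aws s3 ls "s3://${ns}-bucket"
}

# Hypothetical helper: print one object's contents to standard output.
show_ostoy_object() {
  local ns="${1:-$OSTOY_NAMESPACE}"
  aws s3 cp "s3://${ns}-bucket/$2" -
}

# Usage: list_ostoy_bucket && show_ostoy_object "$OSTOY_NAMESPACE" <file-name>
```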